Stop locking estimates, start forecasting

Failing to meet timelines is often caused by false expectations

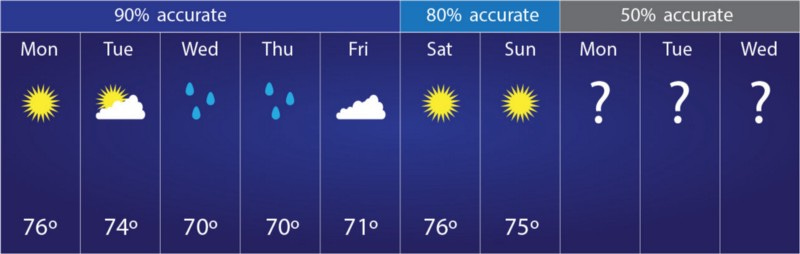

Most weather forecasts don’t match reality, especially forecasts made more than a week in advance.

Yet despite how often weather forecasts are wrong, we still pay attention to them. Weatherwomen and weathermen all over the world tell us the weather every day, are often wrong, and still we keep listening. We know being wrong comes with the job.

We grant the weatherman the luxury to update his forecast as more information becomes available. Why do we often not grant our software development teams this same luxury?

What can we learn from weather forecasting?

The accuracy of a weather forecast drops to around 50% when you try to predict the weather more than 7 days in advance. The closer you get to the date you’re trying to forecast, the better the information you have to make a prediction, and the more accurate your forecast will be.

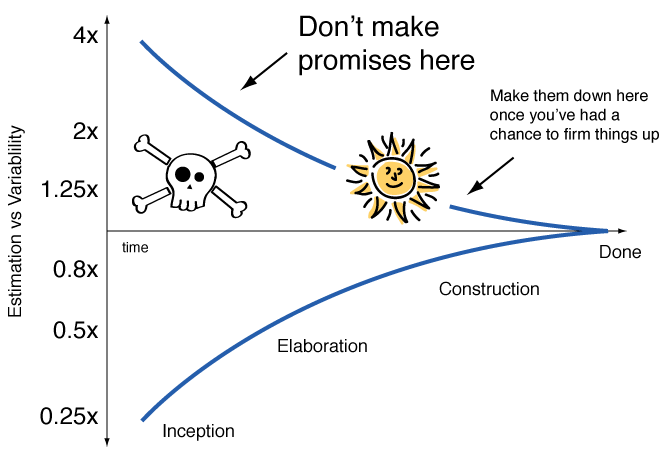

In software estimation, the same thing happens. This phenomenon is called the cone of uncertainty.

The cone of uncertainty shows that before starting we are clueless about what truly matters and hence our estimates are often significantly off. As we learn and know more by doing the work, we will be able to provide better estimates.
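To make the cone concrete, here is a minimal sketch of how one point estimate turns into a range that narrows as you learn more. The phase names and multipliers are my own illustrative assumptions, not measurements from any team, and `estimate_range` is a hypothetical helper.

```python
# Illustrative assumption: each phase maps to (low, high) multipliers
# applied to a single point estimate. The numbers are made up for the sketch.
PHASE_MULTIPLIERS = {
    "initial idea": (0.25, 4.0),
    "requirements agreed": (0.67, 1.5),
    "design done": (0.9, 1.1),
}


def estimate_range(point_estimate_weeks, phase):
    """Return the (low, high) range in weeks for a point estimate at a given phase."""
    low, high = PHASE_MULTIPLIERS[phase]
    return point_estimate_weeks * low, point_estimate_weeks * high


# The same 10-week estimate, communicated as a range at each phase.
for phase in PHASE_MULTIPLIERS:
    low, high = estimate_range(10, phase)
    print(f"{phase}: {low:.1f} to {high:.1f} weeks")
```

The point estimate never changes in this sketch. Only the width of the range around it does, which is the part stakeholders need to see.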

Except in software development we’re often less savvy than the weatherman. We often fear we will be criticized if our forecasts are wrong. We foolishly stick to our initial estimates, as if adjusting them means admitting we don’t know what we’re doing.

Why do we treat it as a failure if predictions are adjusted as more information becomes available? What if we do know what we’re doing, but we just don’t know what we need to know until we start doing?

What should we do differently when talking about estimates?

The trick is to forecast timelines every two weeks and explain the cone of uncertainty to your stakeholders. The best place to do this is the Sprint Review.
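One simple way to refresh a forecast every sprint is to divide the remaining work by the fastest and slowest velocity you have observed so far. The sketch below assumes work is sized in story points and that past velocity is a usable signal. The function name and all numbers are hypothetical.

```python
import math


def forecast_remaining_sprints(remaining_points, velocities):
    """Return (best_case, worst_case) sprints left, using observed velocities."""
    if not velocities or min(velocities) <= 0:
        raise ValueError("need at least one positive velocity")
    best = math.ceil(remaining_points / max(velocities))
    worst = math.ceil(remaining_points / min(velocities))
    return best, worst


# Hypothetical data: 60 points of work, velocities observed sprint by sprint.
remaining = 60
observed = []
for velocity in [8, 14, 11]:
    observed.append(velocity)
    remaining -= velocity
    # Re-forecast with everything known at this Sprint Review.
    best, worst = forecast_remaining_sprints(remaining, observed)
    print(f"After sprint {len(observed)}: {best} to {worst} more sprints")
```

Presenting the result as a range rather than a single date keeps the conversation on the latest information instead of the original guess.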

During the Sprint Review, stress that the timelines you present are based on the currently available information and are not deadlines or promises. As we get closer to the forecasted date, our estimates will become more accurate.

You can explain this by saying your forecasts are like the weather forecast: the further into the future a forecast reaches, the less accurate it will be. The closer you get to the date, the more accurate it will be.

Unfortunately, many teams do not treat the timelines in their plans like weather forecasts. Updating or moving timelines when you have more information is seen as a failure. This is the wrong way of dealing with inaccurate forecasts. It’s the ‘putting your head in the sand’ approach to forecasting.

Prediction is valuable, but adaptation as more information becomes available is more valuable. Adjusting your timelines when you have more information should be seen as a success. You’re leveraging the latest and best information at your disposal. You’re learning, and that is not the same as failing. Learning means doing something better than before. You can’t do that if you stick to the original plan.

The moment you change the forecast, be transparent about it with your stakeholders and explain why. You can even give stakeholders the option to pull the plug on that feature if they are not happy with the amount of time you are spending compared to the initial estimate.

Often when you start working on something new, it’s the beginning that is the most uncertain. Once you have a foundation, adding new features on top of it usually becomes way more predictable. After you have finished a small chunk of work, you can usually provide a way more accurate forecast of the larger chunk it belongs to.

I do not present naked estimates of the Development Team to stakeholders. I work together with the team to make a forecast based on their estimates. Once we agree on a feasible forecast, it is sensible to add a lot of scheduling buffer on top.

Doubling estimates to arrive at a forecast will by no means guarantee that timelines are met. As Hofstadter’s Law puts it:

It always takes longer than you expect, even when you take into account Hofstadter’s Law.

Expanding forecasts just prevents a lot of initial disappointment and back-and-forth after you start working on something. It gives you wiggle room for the unexpected, which is to be expected. Give a wide time range estimate in the beginning and narrow it down as the cone of uncertainty becomes slimmer.

Worry less about timelines and more about value

We love talking about timelines, but we should actually be far more concerned about the value we are delivering. Everything I said about uncertainty in timelines applies just as much to the delivery of value. Before we work on something, uncertainty about whether it is valuable is at its highest.

Experiments and validation are necessary to make sure you are delivering value. Treat everything you build as if it isn’t valuable until you gain evidence and confidence to the contrary. You don’t deliver value by just doing, you deliver value by doing and learning.

It’s not about what you build, but how it is received. To understand how what you build is received, you need to listen and collect feedback. Learning, by definition, is messier and less predictable than doing. You need to act in the moment, based on the latest and best information, to seize the opportunity as it is presented.

Value is delivered by taking initiative, not by acting on the assumption that your plans are perfect from the start. Prediction is nice, but adaptation is better. Adjusting your course based on what you have learned means what you’re building is rooted in reality instead of being just a product of your imagination.