The Cannibalization of Competence - Part II: Rocket Fuel In Rusty Lawn Mowers

In Part I, we explored which kinds of organizations won’t benefit from AI.


To quickly recap Part I:

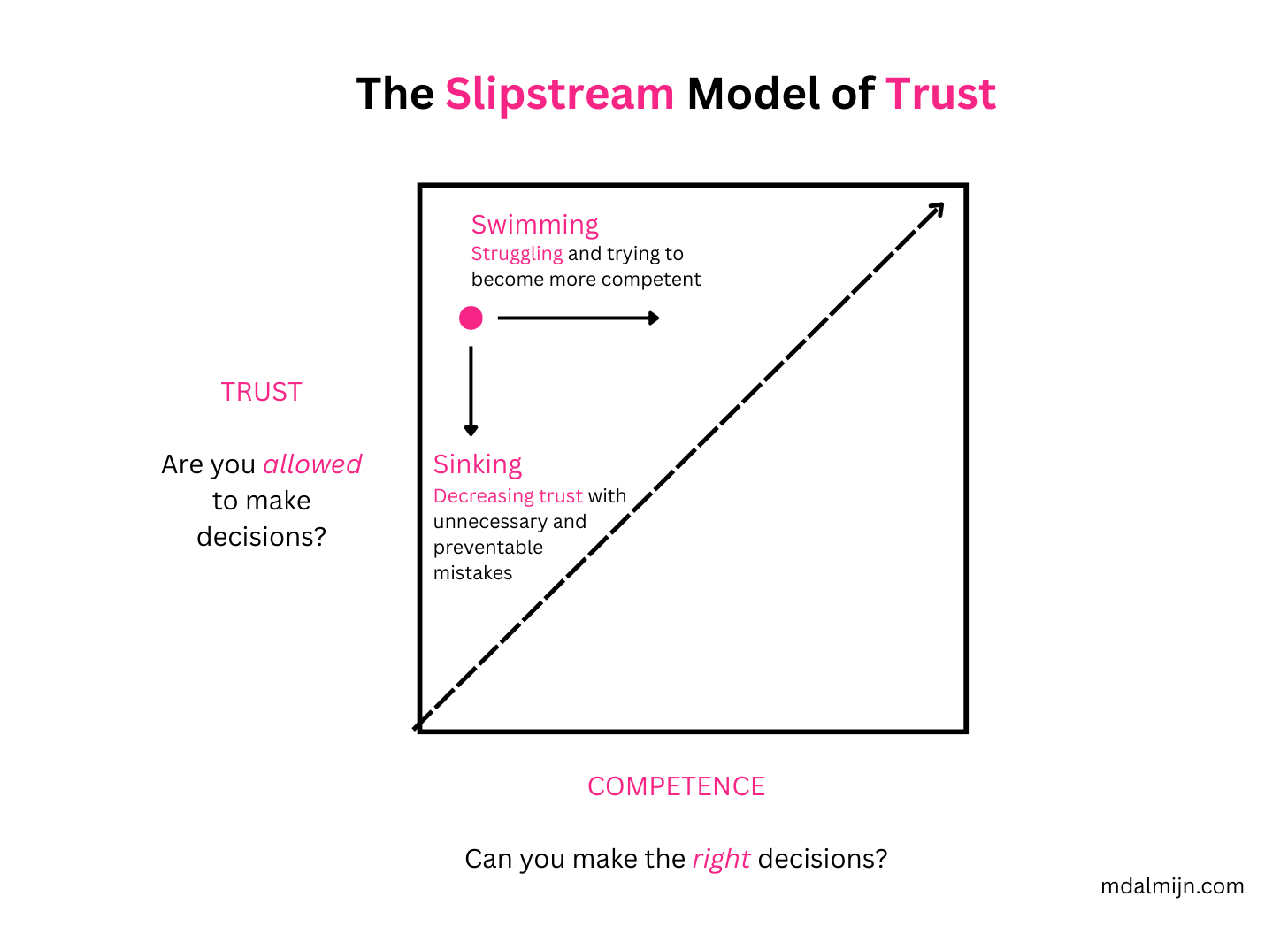

If you want to leverage the full potential of your employees, they should enjoy more Trust than their level of Competence.

Most organizations Trustcap their employees, which means employees get less Trust than their level of Competence. Trustcapping results in reduced Competence and reduced Agency (the ability to influence results with your decisions and actions), and it may even cause people to start under-performing.

When you’re Trustcapping your employees, your organization is like a dinosaur waiting for the meteor to strike in the age of AI. If your employees can’t exercise the Competence they already have without AI, then increasing their Competence with AI won’t make a difference.

Upskilling your employees with AI while you’re Trustcapping them is like putting rocket fuel in a rusty old lawn mower: it won’t change a damn thing.

If you’re in a situation of Trustcapping with low Agency and the Cannibalization of Competence going on, what should you be doing instead of heavily investing in AI?

Let’s answer this question by examining what kind of organizations will benefit from AI.

What Kind of Organizations Will Benefit From AI?

Let’s look at which kinds of organizations will benefit the most, using a real-world example from my own experience.

Imagine you join a company where the level of Trust is significantly greater than your level of Competence. Let’s assume you’re extremely inexperienced and low in Competence, but you’re joining a start-up that already has a high-Trust environment.

That’s exactly what happened to me when I landed my first job as a Product Manager.

If you join a company where you enjoy a much higher level of Trust than your level of Competence, you’re in a Sink or Swim situation.

Just like you shouldn’t throw a kid in a swimming pool when they can’t swim, it’s not the best idea to put a new employee in a sink or swim situation.

For many months, I worked overtime and toiled away to grow my level of Competence. Because I was trusted to make many decisions I wasn’t capable of making, I accumulated many mistakes, which diminished Trust. I wasn’t only Swimming, I was also Sinking. I was struggling, and I believed I wasn’t doing a good job.

It was the only job I had where I wondered why they didn’t fire me, as I really was in over my head.

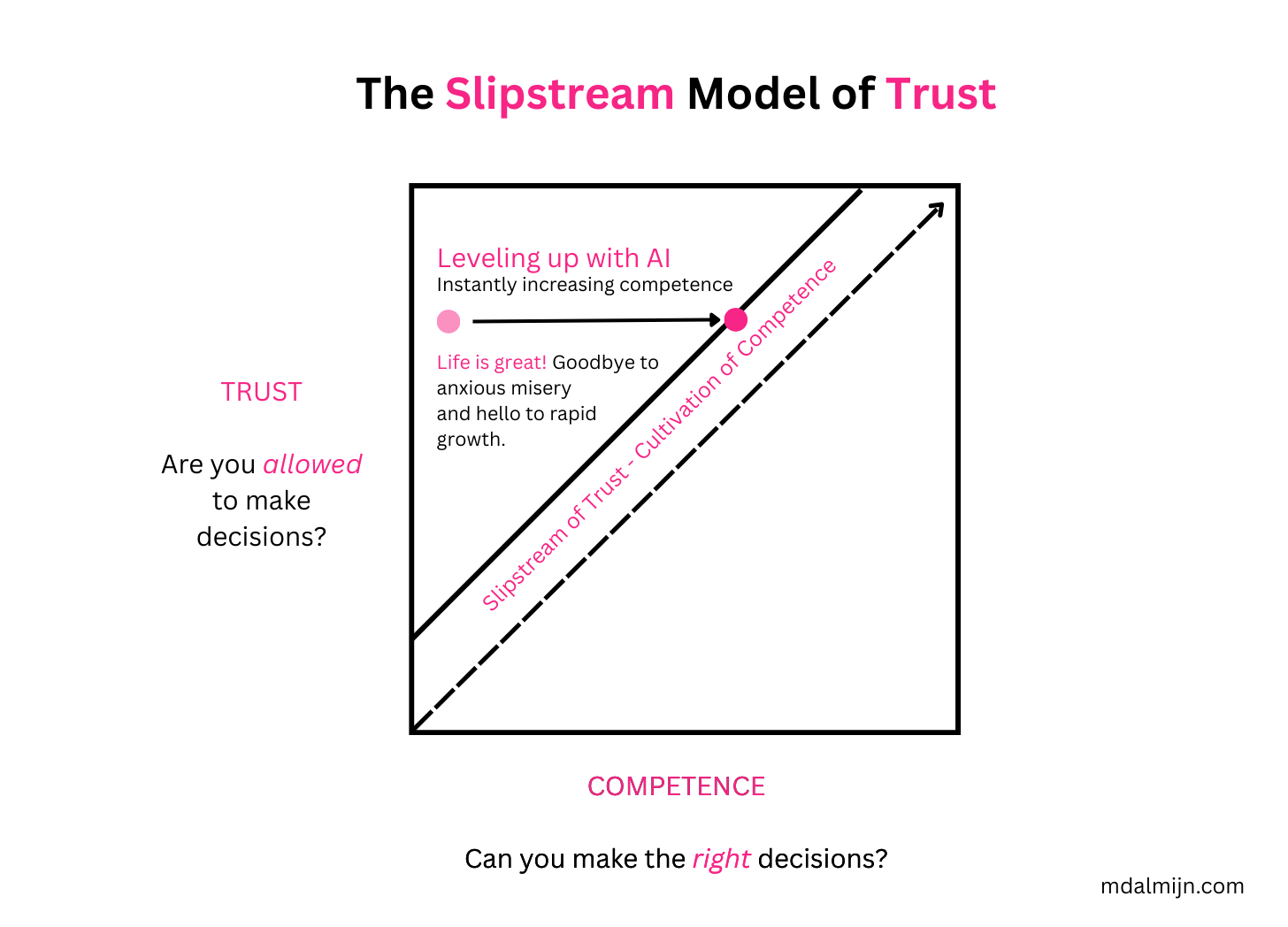

What would happen if we introduced AI into this high-Trust environment? Let’s wave the AI magic wand from Part I once again.

The same caveats apply: AI isn’t good enough (yet) to massively increase our level of Competence, and Competence can’t be increased instantly because it grows gradually. It’s still a valuable thought experiment to show what would happen.

Let’s explore the ramifications of instantly leveling up Competence with AI, using our trusty old friend, the Slipstream Model of Trust, once again:

Life would be great! I would no longer be anxious and miserable, and I would enter the Slipstream of Trust, where I’d enjoy slightly more Trust than I deserve based on my level of Competence.

The Slipstream of Trust is where you can safely grow Competence and Agency. You have enough freedom to experiment, grow, and learn, but not so much freedom that it feels like you’re about to drive off a cliff.

We can explain the Slipstream of Trust using an example from the world of racing. A racer in another driver’s slipstream moves faster. Drift too far out of the slipstream, and you encounter dirty air that increases drag and slows you down.

The Slipstream of Trust is the place where you want to be. It’s the perfect area of Proportional Trust: enough freedom to make decisions, but not so much freedom that you’re at risk of seriously screwing things up.

Let’s say you’re convinced by my argument that Trust is the key factor to focus on first. Imagine you’re in an environment where your employees are being Trustcapped.

To address the high level of Trustcapping, you decide to drop AI for now and splurge on Trust for your employees. You now believe that if only you wrap your employees tightly in a snug little blanket of Trust, you’ll be safe from the impending AI meteor.

That’s not the solution either. Allow me to explain why.

Splurging on Trust Is Dangerous

Would you let an 18-month-old who just learned how to walk cross the road?

Competence: can they make the right decisions about crossing the street? HELL NO! An 18-month-old is almost wholly incompetent when it comes to crossing roads. They can’t judge whether it’s safe to cross. Even if they could make a sound decision, they just learned how to walk, so there’s a good chance they’ll trip in the middle of the street.

Trust: would I allow them to make the decision of crossing the road by themselves? Absolutely not - PERMISSION DENIED.

So what am I going to do instead of trusting them to cross the road on their own? I’m going to hold their hand and cross the street together with them. I’m giving the 18-month-old Proportional Trust: a level of Trust that is appropriate for their level of Competence.

I’m basically holding their hand and creating an environment of Proportional Trust by riding the Slipstream of Trust together.

It’s absolutely crucial that employees receive Proportional Trust. Both too much Trust and too little Trust for their level of Competence hurt Agency.

Ideally you enjoy slightly more Trust than you deserve based on your level of Competence, because that’s the ideal environment for safely growing your Agency.

The bandwidth of the Slipstream of Trust is mostly determined by two things:

Acceptable risk levels. How much risk is acceptable? A start-up and an established bank with millions of customers have totally different risk profiles.

Psychological Safety. How safe do people feel to take risks and make mistakes without fear of repercussions?

The higher the acceptable level of risk and the higher the Psychological Safety, the wider your Slipstream of Trust can be.

Trust Is More Important Than AI

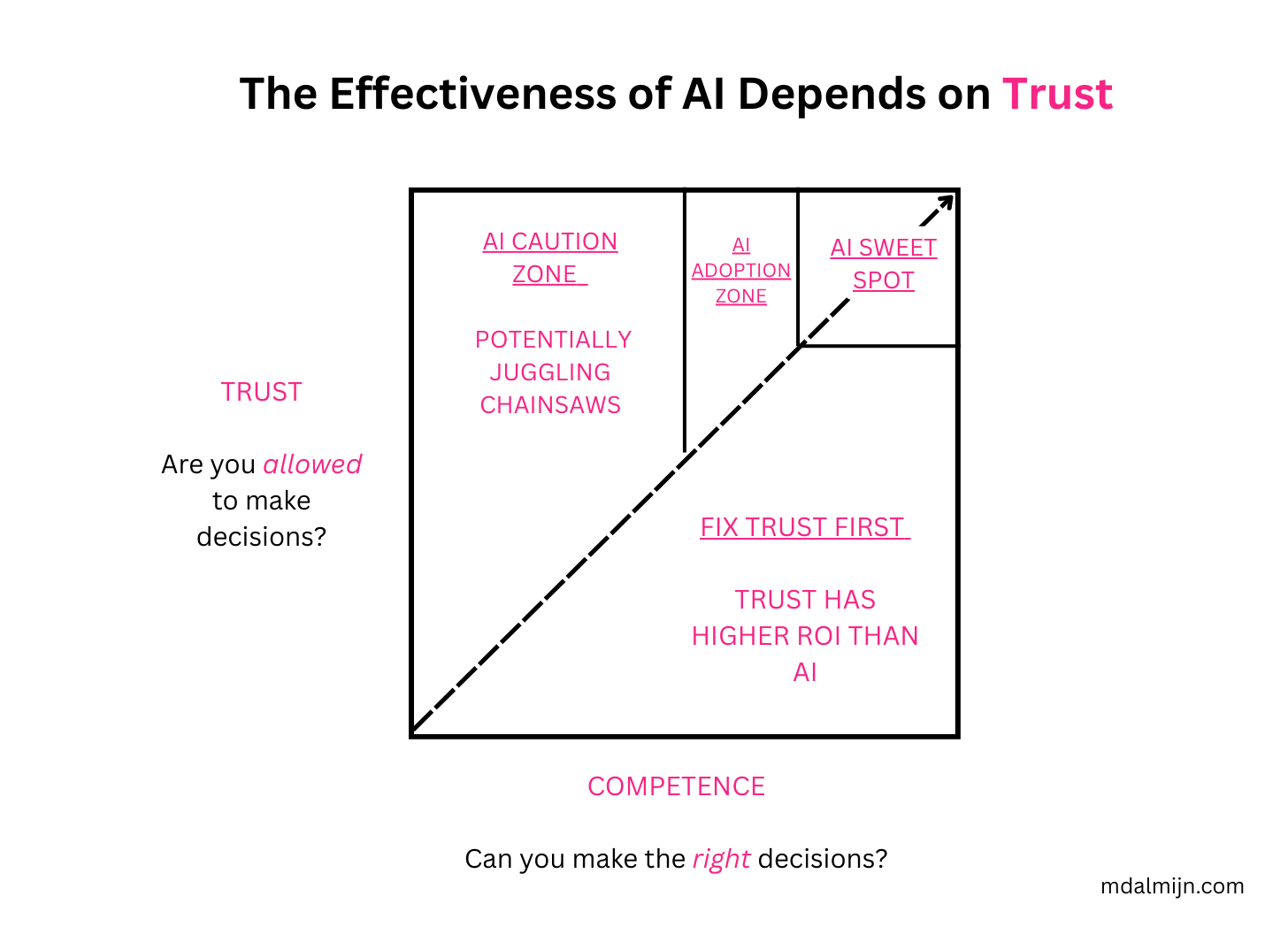

If you want to know whether it makes sense to upskill with AI, it’s far more important to map your current situation onto the model above. Depending on where you are, AI won’t change a damn thing.

Let me explain the different zones in the model:

AI Sweet Spot. Employees with high Agency (high Competence, high Trust, and high Psychological Safety) will benefit the most from AI. They know what they’re doing and they know the risks they’re taking. This is the place you want to be if you want to get the most value out of AI.

AI Adoption Zone. When your employees enjoy mid or slightly higher Agency and they’re not Trustcapped, you should adopt AI.

AI Caution Zone. When your employees have low to mid Agency, and they receive an appropriate level of Trust because they’re not Trustcapped, it makes sense to adopt AI, but you should proceed with caution. Make sure they work together with more senior people and ride the Slipstream of Trust, as they’re potentially juggling chainsaws and AI does not have the judgement yet to prevent this from happening.

Fix Trust First. If your employees are being Trustcapped and they don’t have high Agency, then the ROI of increasing Agency by improving Trust and Psychological Safety is far greater than that of adopting AI. In fact, if you adopt AI too soon, it might even decrease the level of Agency. Increasing Competence with AI when employees are already being Trustcapped is a recipe for frustration and even further diminished Agency.

In a nutshell:

If you’re below the middle line and your employees don’t have high Agency, you shouldn’t be leveling them up with AI. Instead, work on increasing Trust and Psychological Safety so that you can get all of their current Competence on the road, without AI.

If you’re above the line, Trustcapping isn’t a problem anymore, but you should create the conditions for Agency to grow so you can benefit even more from your existing expertise with AI.

Once you’ve addressed the Trustcapping, only then should you worry about gradually increasing Competence even further with AI.

Most organizations are Trustcapping their employees. All implementing AI will do is frustrate them even further and make them appear even more incompetent.

If you want to upskill with AI, the best place to be is in or near the Slipstream of Trust, where you enjoy slightly more Trust than your level of Competence. The bandwidth of the Slipstream is determined by the acceptable level of risk and the level of Psychological Safety.

When you’re in the Slipstream of Trust, gaining more Competence earns you more Trust, and gaining more Trust lets you grow more Competence. Ultimately, this allows you to develop the high-Agency environments that will benefit the most from AI.

I want to stress that we need inexperienced, low-Agency people more than ever. Once those employees reach high Agency, they will be able to do things with AI that your current best employees never will.

Most Organizations Are Black Holes For Employee Brilliance

Most organizations are black holes for their employees, devouring brilliance and leaking frustration, because Trustcapping prevents employees from making the right decisions.

According to Gallup in 2025, roughly 21% of employees are engaged at work, which means 79% are not engaged or even actively disengaged. ‘Not Engaged’ is a euphemism for people who are ‘Checked Out’: they don’t really care what happens in your organization.

There is a silver lining, and the situation regarding Engagement and Competence isn’t as depressing as I’ve portrayed it: the best teams rarely have the best individuals.

Read that sentence again and let it sink in.

I’ve even experienced the opposite: the worst teams I’ve worked with had the most competent people I’ve ever worked with.

The best teams I worked with weren’t packed with geniuses or industry veterans.

Companies don’t need better individuals, they need better teams with more Agency.

High Trust organizations with high Competence and high Psychological Safety will benefit the most from AI, because those are the only companies where employees can exercise their Agency to get the most out of AI.

If your teams don’t already have Agency, adding AI won’t matter much. Your Competence is already capped without AI. Many companies have a huge opportunity to increase Agency, Competence, and Trust by riding the Slipstream of Trust.

That’s what we should be working on, because only then can we truly reap the benefits AI promises to offer.

Special thanks to Robert Kooloos, Ujjwal Sinha, Tanner Wortham, Koen Muurling for their feedback.
